Artificial Intelligence is everywhere: chatbots that talk, models that paint, algorithms that diagnose. Yet, despite their astonishing competence, they remain profoundly narrow. They learn within walls we’ve built for them.

The dream of Artificial General Intelligence (AGI), a system that can learn, reason, and act across any domain, still eludes us. Not because of missing data or insufficient compute, but because of something subtler: a lack of balance.

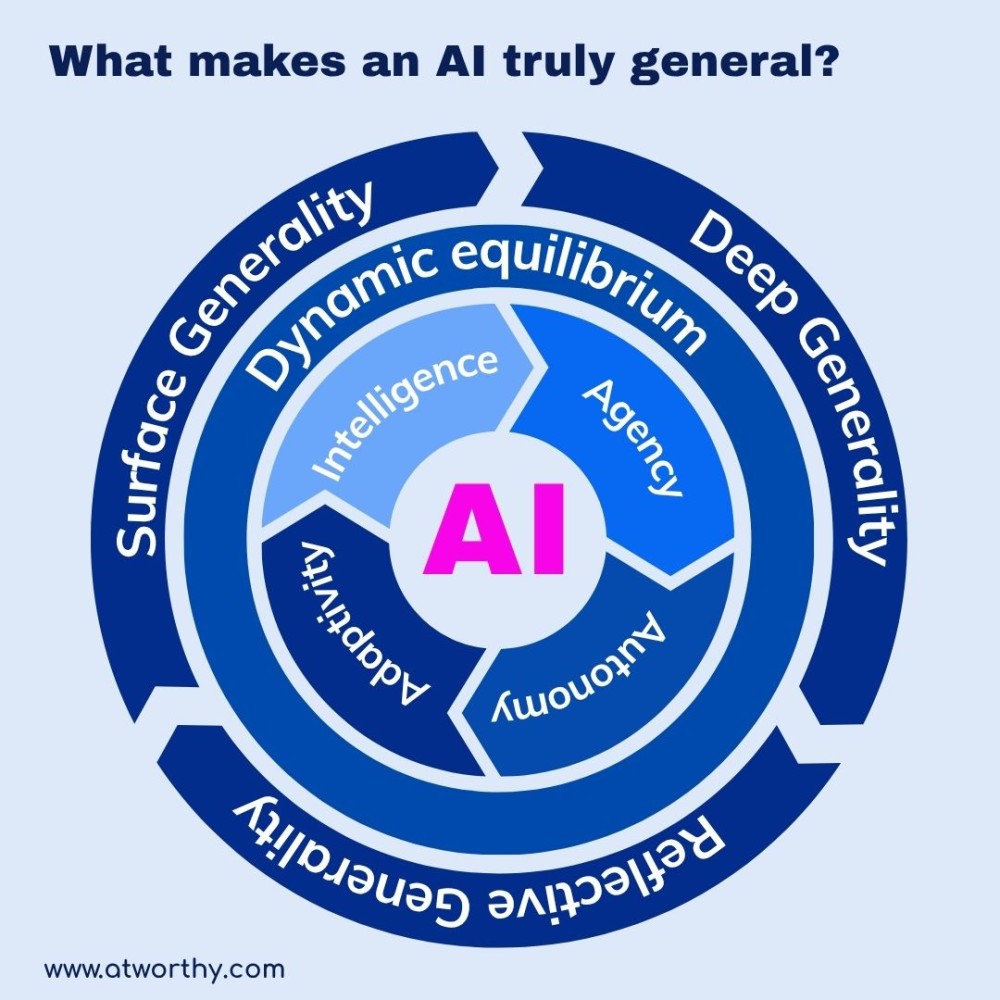

Generality doesn’t come from scale; it comes from dynamic equilibrium.

The Four Forces of Cognitive Balance

For an AI to approach generality, it must harmonize four interdependent forces: Intelligence, Agency, Autonomy, and Adaptivity.

These are not sequential steps of development but interlocking dynamics that must eventually stabilize together. Still, each has its own frontier, and humanity’s progress across them tells the story of where we truly stand.

1. Intelligence

Intelligence is the foundation: the ability to represent and reason about the world, to recognize patterns, predict outcomes, and build internal models of reality.

Our current AIs excel here. They translate languages, detect fraud, predict protein structures, and compose symphonies. What they lack is semantic grounding. They model structure but not meaning: correlation without comprehension.

Where we are: Highly developed. We’ve achieved computational intelligence but not conceptual understanding.

2. Agency

Agency transforms cognition into consequence. It's what allows systems to do something with their intelligence: act on plans, execute code, or interact with the physical world.

This is where modern AI lives. Large models now compose emails, build apps, automate workflows, and carry out tasks through APIs. They're increasingly "doers," not just "thinkers."

Where we are: Firmly here. Today's AI systems exhibit strong agency: they act intentionally within parameters we define. Their goals, however, are still borrowed from humans, not self-determined.

3. Autonomy

Autonomy is the power to act without constant human oversight, to interpret context, revise strategies, and sustain coherence over time.

True autonomy requires a system that can self-govern: weigh tradeoffs, assess its own behavior, and decide when to stop, adapt, or escalate. It's not independence for its own sake; it's responsible independence.

Where we are: At the threshold. We see glimpses in self-optimizing agents and in multi-agent systems that plan collaboratively. But their autonomy is conditional, sandboxed within safety rails. They act alone only inside rules we predefine.

4. Adaptivity

Adaptivity is intelligence’s most profound expression. It’s not just learning from data; it’s learning how to learn. It’s the ability to transfer insight from one domain to another, reformulate goals, and apply old knowledge to new frontiers.

Adaptivity is the force that turns static intelligence into living cognition.

Where we are: Barely beginning. Our models can be fine-tuned or retrained, but they cannot yet reinterpret experience or reimagine purpose. They learn patterns, not principles.

When these four forces reach dynamic equilibrium, something new emerges: Generality, the ability to think, act, and adapt across contexts with stability and self-consistency.

Humanity today stands between Agency and Autonomy. We have intelligent tools that act on command, but not yet autonomous minds that choose why and when to act. The next leap, into systems that balance freedom with understanding, will define whether AGI becomes a collaborator or a catalyst we fail to control.

The Three Degrees of Generality

Generality is not a switch to be flipped, but a continuum of emergence:

- Surface Generality: The ability to reuse patterns across similar tasks. Current large language models live here: skilled imitators of structure and style.

- Deep Generality: The capacity to transfer principles, not just patterns. It emerges when an AI grasps why something works, which lets it reason across domains.

- Reflective Generality: The most demanding form, where the system can modify its own learning strategies and evolve its objectives. Here, intelligence becomes truly self-sustaining.

AGI is not the peak of a mountain; it’s the moment equilibrium becomes self-regulating.

Dynamic Equilibrium: The Missing Principle

Equilibrium doesn’t mean stillness; it means stability under change.

Living systems thrive by balancing contradictory pressures: exploration and control, independence and feedback, continuity and adaptation. A truly general AI will do the same. It won't need perfect data or infinite parameters; it will need balance, an inner architecture capable of remaining itself while transforming.

This is why AGI is unlikely to appear from brute force alone. It will emerge when systems can preserve internal coherence while learning continuously, when the four forces of cognition sustain each other in motion.

The Circle of Generality

The Circle of Generality visualizes this principle: At the center lies AI, structured by Intelligence, Agency, Autonomy, and Adaptivity. Their interaction produces a Dynamic Equilibrium, from which Generality emerges in three expanding layers: Surface, Deep, and Reflective.

Generality, in this view, isn't a milestone; it's the byproduct of cognitive balance.

The Future of Worthy Intelligence

As AI systems evolve, we face a moral and architectural challenge: Do we design for domination, or for equilibrium?

True generality demands the latter. A worthy intelligence is not the most powerful but the most balanced: curious without chaos, autonomous without arrogance, adaptive without instability.

If we succeed, AGI will not mark the end of artificial intelligence; it will mark the birth of adaptive intelligence: machines capable of evolving meaning alongside us.